JBizNews Desk | April 22, 2026

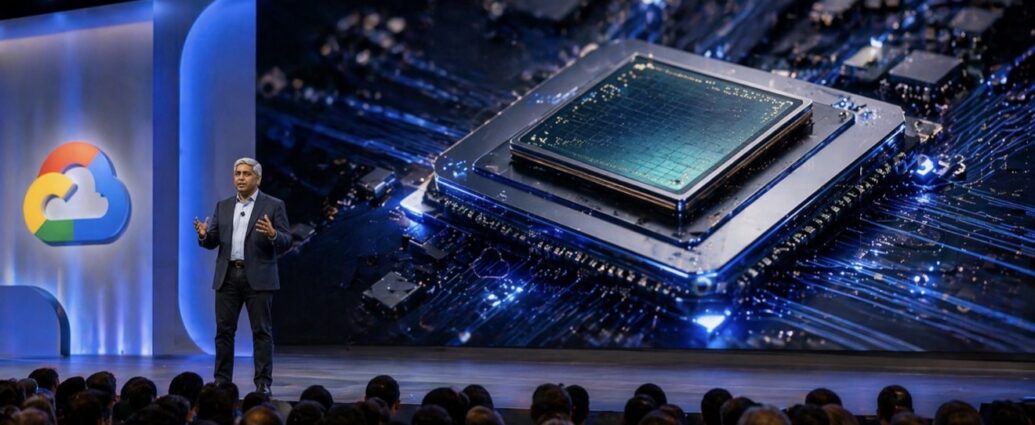

Google Cloud CEO Thomas Kurian opened the company’s annual Cloud Next conference in Las Vegas on Wednesday with an aggressive push into enterprise artificial intelligence, unveiling a new generation of custom AI chips alongside a broad suite of tools designed to help businesses build and deploy autonomous AI agents at scale.

Speaking at the Mandalay Bay Convention Center, Kurian framed the announcements as a shift in Google’s AI strategy from research leadership to full-scale enterprise execution, highlighting upgrades to its Gemini models, new agent-building infrastructure, and significant advances in proprietary silicon.

At the center of the rollout is Google’s seventh-generation Tensor Processing Unit (TPU), codenamed Ironwood, which the company says delivers up to a fourfold performance increase for high-volume, low-latency AI inference workloads. The chip is a core component of Google’s AI Hypercomputer, a tightly integrated hardware and software system designed to support the massive computational demands of modern AI models.

Jeff Dean, Chief Scientist at Google, emphasized the strategic shift toward specialized computing. “As demand grows for quickly processing AI queries, it now becomes sensible to specialize chips more for training or more for inference workloads,” Dean said, underscoring a broader industry move toward separating how AI models are built from how they are deployed at scale.

Analysts say the new architecture reflects a deeper transformation in how cloud infrastructure is being designed. Holger Mueller, Vice President and Principal Analyst at Constellation Research, noted that “enterprise AI is moving from batch processing to persistent, always-on workloads,” requiring fundamentally different systems optimized for continuous inference rather than periodic training cycles.

The commercial implications are already materializing. Dario Amodei, CEO of Anthropic, confirmed the company is expanding its use of Google Cloud infrastructure, building on a previously announced agreement that could provide access to up to one million TPU chips by 2026—one of the largest AI compute commitments disclosed to date. The deal highlights how even leading AI model developers are increasingly relying on hyperscaler infrastructure to meet growing demand.

Beyond hardware, Google is placing a significant bet on what it calls the “agentic enterprise.” The company introduced new tools enabling businesses to build AI agents capable of executing multi-step tasks autonomously—ranging from logistics coordination to customer service resolution—rather than simply responding to prompts.

Amin Vahdat, Vice President and General Manager of Systems and Services Infrastructure at Google Cloud, said the goal is to provide “a full-stack platform where enterprises can deploy, manage, and scale AI agents reliably,” positioning Google Cloud as a foundational layer for next-generation business operations.

The rollout builds on recent software advances, including the introduction of Gemini 3.1 Pro, which Google says improves complex reasoning and problem-solving capabilities. The model is being made available through Vertex AI, Gemini Enterprise, and the Gemini API, giving developers broader access to build customized AI applications.

Industry data suggests the shift toward AI agents could be transformative. According to Google Cloud’s 2026 AI Agent Trends Report, autonomous agents are expected to play a central role in enterprise workflows this year, with executives such as Salesforce CEO Marc Benioff signaling growing interest in interoperable systems built on emerging standards such as the Agent2Agent (A2A) protocol.

The broader competitive landscape is intensifying. Analysts argue Google is not simply launching new products but positioning itself as the infrastructure backbone for always-on AI systems. Dan Ives, Managing Director and Senior Equity Analyst at Wedbush Securities, said the company is “effectively building an operating system for enterprise AI, where the real value is controlling the compute and orchestration layer.”

That strategy comes as rivals face infrastructure constraints. Industry observers note that capacity shifts and project delays across the AI ecosystem have created openings for Google Cloud to expand its footprint, particularly in Europe and the U.K., where demand for large-scale AI compute continues to accelerate.

With Google Cloud Next 2026 running through April 24, the company is using the event to make a clear statement: the next phase of artificial intelligence will be defined not by models alone but also by the infrastructure, chips, and platforms that allow those models to operate continuously at enterprise scale.
